2026 Guide to Automated Visual Inspection Systems

Advancements in artificial intelligence have made automated visual inspection significantly more accessible and dependable for pharmaceutical manufacturers and other industries with stringent quality standards. Traditional manual inspection, by comparison, faces well-known limitations. Human inspectors experience fatigue, and performance can vary widely from one person to the next — one operator may consistently catch the smallest anomalies while another may overlook critical defects.

These improvements in AI-driven inspection technology couldn’t arrive at a more critical moment. Manufacturers are bracing for new challenges in 2026. Tariffs, for example, have increased production costs and pushed companies like Merck, Pfizer, and Eli Lilly to relocate operations within the United States to avoid substantial penalties. At the same time, the rising cost of manual labor has made automated inspection solutions more appealing and economically viable.

This article examines the latest innovations in automated vision and inspection and explains how these technologies can help manufacturers improve product quality, reduce operational costs, and enhance throughput, all while maintaining strict regulatory compliance.

TL;DR

Automated visual inspection is becoming essential as manufacturers face rising costs, stricter regulations, and growing production demands. Traditional manual and semi-automated methods struggle with fatigue, variability, and limited throughput, while modern AI-driven systems deliver consistent, high-accuracy inspection across a wider range of products. Advances in imaging, lighting, and machine learning (especially unsupervised models) have dramatically reduced false rejects, accelerated recipe creation, and improved qualification success. Compact systems like the DAI-50 make automation accessible even for low-volume, difficult-to-inspect products. Overall, these technologies enhance product quality, reduce operational burden, and provide a scalable path forward for 2026 and beyond.

What Are Automated Visual Inspection Systems?

Automated visual inspection systems use a combination of cameras, sensors, and in some cases artificial intelligence to evaluate products automatically. These systems can capture hundreds of images of a single unit and perform hundreds of thousands of machine learning inferences within milliseconds to determine whether it is defect free and meets quality standards.

In pharmaceutical manufacturing, defects are commonly grouped into three categories: critical, major, and minor. Modern inspection platforms can detect defects across all three categories with high accuracy, and some systems can also classify the defects they find.

Beyond visual inspection, some automated systems incorporate additional sensors to assess container closure integrity for vials and other sterile products. These sensors use vacuum decay to identify extremely small, otherwise invisible leaks that could compromise product sterility.

The Evolution from Manual to Automated Visual Inspection

Manual Visual Inspection (MVI)

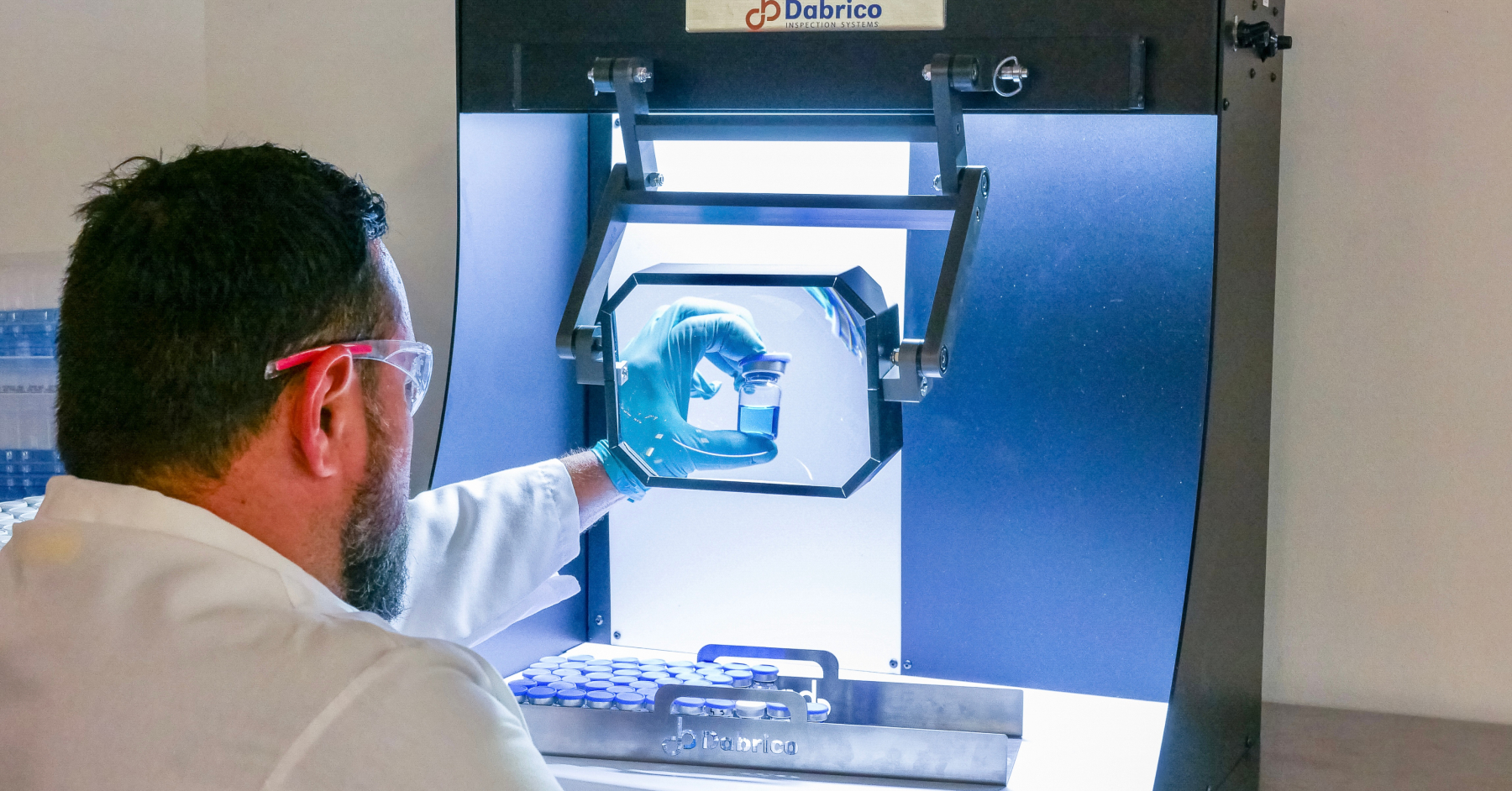

Manual inspection has been used for centuries and remains the benchmark in pharmaceutical manufacturing. In fact, to qualify an automated inspection system, the machine must pass a Knapp test, demonstrating that its accuracy is equal to or greater than that of a trained and qualified human inspector.

Manual inspection requires evaluating one unit at a time, typically against alternating black and white backgrounds. This technique, known as background contrast inspection, helps highlight different types of defects by improving visibility of particles, cracks, and cosmetic anomalies.

Despite its long-standing use, manual inspection presents several limitations, including:

- Operator fatigue, which leads to declining accuracy over time

- High variability between inspectors, even when fully trained

- Slow throughput, making it difficult to scale

- Higher labor costs, especially for operations requiring large teams

- Inconsistent defect detection, particularly for subtle or intermittent issues

Semi-Automated Visual Inspection (SAVI)

Semi-automated visual inspection combines the speed and consistency of automation with the flexibility and judgment of a human inspector. These systems typically operate at 10 to 30 units per minute, offering higher throughput than fully manual inspection while still relying on an operator for the final decision.

In a typical setup, units are delivered via accumulation tables and move from left to right through the machine. Each vial undergoes a pre-spin, creating a vortex that helps mobilize and reveal any particulate that may be present. The vial then pauses in front of the human inspector, who evaluates it visually — often using magnification to enhance the ability to detect subtle defects. When a defect is identified, the system records the observation, and an automated rejection mechanism removes the flagged unit from the line.

SAVI systems are especially common for products that are difficult to inspect, including:

- Lyophilized cakes

- Suspensions

- Molded-glass containers

These formats often present unique detection challenges that benefit from human interpretation.

However, SAVI systems face many of the same limitations as manual inspection. Operator fatigue, variability in judgment, and the inherent subjectivity of human evaluation still apply — and in some cases, these challenges are amplified by the higher operating speeds characteristic of semi-automated environments.

Automated Visual Inspection (AVI)

Traditionally, automated visual inspection systems have been used for products manufactured in very large volumes, often exceeding one million units per year. These products are typically clear liquids, which are well suited for high-speed, camera-based inspection.

Automated systems may use up to 24 cameras to evaluate a single unit from multiple angles. As with SAVI systems, a pre-spin is applied to mobilize particulate, allowing the cameras to capture defects more effectively. Conventional automated inspection relies heavily on traditional vision techniques, such as image subtraction, to detect anomalies.

More recent systems, however, incorporate artificial intelligence to enhance detection accuracy and adaptability. These AI-enabled platforms can learn complex defect patterns, reduce false rejects, and improve performance on products that would otherwise challenge traditional algorithms.

Historically, manufacturers adopted automated inspection primarily for the speed it provides. Many systems are capable of inspecting well over 100 units per minute, delivering significant throughput advantages. But advancements in AI are expanding the use cases beyond just high-volume, clear-liquid products. Modern AI-driven systems can now effectively inspect difficult-to-evaluate formats that once required human inspectors.

The DAI-50 is a strong example of this evolution. Using unsupervised machine learning, it delivers human-like accuracy while maintaining the reliability, speed, and consistency of a fully automated system.


How Automated Visual Inspection Systems Capture the Perfect Image

For an automated visual inspection system to consistently detect extremely small particles, it must excel at two fundamental tasks. First, it must capture an image in which the particle is clearly visible. Second, it must analyze that image to determine whether the particle represents normal variation or a true defect.

Achieving a perfect, repeatable image — millions of times over — requires precise control of the inspection environment. Automated systems rely on a coordinated combination of cameras, specialized lighting, and mechanical automation to position each unit correctly, illuminate the product in a way that reveals potential defects, and capture high-quality images at exactly the right moment.

This controlled process ensures that even subtle anomalies are consistently visible, enabling reliable and accurate inspection across every unit that passes through the system.

Step 1: Positioning the product

Just as hitting a home run starts with the right pitch, capturing the perfect inspection image begins with placing the product in exactly the right position. Proper positioning is one of the most critical steps in automated visual inspection.

Many defects in pharmaceutical vials and syringes are not immediately visible. A hair may cling to the underside of a stopper, or a tiny metal fragment may rest motionless at the bottom of a syringe. To reveal these hidden particulates, the system must move the product in a way that brings defects into view.

This is achieved through a process called pre-spin. By rapidly rotating the vial, the system creates a vortex inside the solution. The swirling motion lifts and dislodges particulate, suspending it long enough for cameras to capture a clear image.

Some defects are heavier or more difficult to mobilize than others, requiring more aggressive pre-spin. The optimal pre-spin profile depends on factors such as the type of defects typically encountered and the size and geometry of the container.

Effective positioning — combined with the right pre-spin — sets the stage for accurate, reliable inspection across millions of units.

Step 2: Perfecting the lighting

Once the vial is positioned correctly for inspection, the next challenge is ensuring every potential defect becomes visible — and this depends entirely on lighting. Automated visual inspection systems rely on precision-engineered lighting environments to reveal subtle imperfections that might otherwise blend into the natural variability of glass, solution, and product.

Different lighting techniques are used to highlight different classes of defects:

- Backlighting creates strong contrast, making floating particulate appear as distinct silhouettes.

- Dark-field illumination exposes scratches, cracks, and bubbles by capturing light that scatters off sharp or irregular surfaces.

- Diffuse lighting minimizes glare on curved glass, allowing cosmetic defects to stand out without interference from reflections.

For difficult-to-inspect formats — such as molded glass, lyophilized powders, or cloudy suspensions — the system further optimizes lighting intensity, angle, and spectrum to prevent washout and ensure defects do not disappear into visual noise.

Ultimately, the purpose of lighting is the same as positioning: to create a perfect, repeatable image millions of times. This gives the inspection system — whether a traditional AVI platform or an AI-driven solution like the DAI-50 powered by AVIS — the clarity it needs to distinguish normal product variation from true anomalies.

Step 3: Capturing the perfect image

Once the product is properly positioned and illuminated to reveal even the most subtle defects, the final step is capturing a high-quality image the inspection system can reliably analyze. In automated visual inspection, this requires precisely synchronized cameras — often multiple viewpoints operating at high frame rates — to freeze motion and expose particles, cracks, or cosmetic anomalies with maximum clarity.

To achieve this, the system must balance sharpness, exposure, contrast, and timing so defects are neither blurred by movement nor lost in glare or noise. Even small inconsistencies in imaging can lead to false rejects or, worse, missed defects. For this reason, modern AVI platforms maintain strict control over every imaging variable, from camera calibration to shutter synchronization.

In the DAI-50, for example, AVIS captures hundreds of frames per vial from top, side, and bottom perspectives, ensuring every surface and every potential defect is imaged under optimal conditions. This produces a clean, crisp, and highly repeatable visual record of each unit.

Capturing the perfect image isn’t just another step in the inspection workflow — it is the foundation upon which accurate, automated quality decisions are made.

Software Used In Automated Visual Inspection

Automated visual inspection systems generally rely on three main categories of techniques. Traditional computer vision uses rule-based methods such as image subtraction to detect changes or anomalies between frames. Neural networks use labeled examples of defects to learn patterns and identify images that appear similar to known defective cases. Unsupervised machine learning, by contrast, is trained only on pre-inspected, compliant product and learns what “normal” looks like, allowing it to flag anything that deviates from that standard as a potential defect.

Traditional Computer Vision

Traditional computer vision refers to rule-based, algorithmic methods that extract and interpret visual information using hand-crafted features rather than learned patterns. These techniques rely on well-understood mathematical operations such as edge detection, thresholding, geometric transforms, and feature descriptors like SIFT, SURF, or FAST. Because they follow explicit rules, they offer transparency and predictable behavior, making it easier for engineers to understand why an algorithm succeeds or fails. Traditional computer vision is efficient, lightweight, and suitable for applications where constraints on computing power, data availability, or latency make deep learning impractical. It’s often ideal for simpler, highly structured tasks — for example, detecting color differences, locating corners, or identifying geometric shapes — and continues to play a valuable role in hybrid systems that pair classic feature extraction with modern machine-learning models.
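To make the image subtraction idea concrete, here is a minimal sketch in plain Python. It assumes grayscale frames represented as nested lists of 0-255 intensities, and the function names, threshold, and pixel counts are illustrative choices rather than settings from any real inspection platform. The idea is that anything static (glass, background) cancels out between two frames, while a particle set in motion by the pre-spin leaves a difference.

```python
from typing import List

Frame = List[List[int]]  # grayscale image as rows of 0-255 intensities


def changed_pixels(prev: Frame, curr: Frame, threshold: int = 30) -> int:
    """Count pixels whose intensity changed by more than `threshold`."""
    return sum(
        1
        for row_prev, row_curr in zip(prev, curr)
        for p, c in zip(row_prev, row_curr)
        if abs(c - p) > threshold
    )


def has_moving_object(prev: Frame, curr: Frame,
                      threshold: int = 30, min_pixels: int = 2) -> bool:
    """Rule-based decision: enough changed pixels means something moved."""
    return changed_pixels(prev, curr, threshold) >= min_pixels


# Two 4x4 frames with a bright, static background (hypothetical data).
frame_a = [[200] * 4 for _ in range(4)]
frame_b = [[200] * 4 for _ in range(4)]
frame_a[1][1] = frame_a[1][2] = 40  # dark particle in the first frame
frame_b[2][1] = frame_b[2][2] = 40  # same particle, one row lower

print(changed_pixels(frame_a, frame_b))     # 4
print(has_moving_object(frame_a, frame_b))  # True
```

The rule is transparent and cheap, which is the appeal of this family of methods, but the fixed threshold is also why it struggles when normal product variation (bubbles, for instance) produces differences of a similar size.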

Neural Networks

Neural networks are computational models inspired by the way the human brain processes information, using layers of interconnected “neurons” to learn meaningful patterns from data. Instead of relying on hand-crafted rules, neural networks automatically discover features by adjusting their internal weights during training, allowing them to recognize complex relationships in images, signals, or text. In computer vision, deep neural networks — especially convolutional neural networks — have transformed tasks like classification, detection, and segmentation by delivering far higher accuracy than traditional methods, particularly when large, diverse datasets are available. Their ability to learn hierarchical features enables them to generalize across varied inputs, though this power comes with tradeoffs such as high computational demand and the need for extensive training data. Still, neural networks remain the backbone of modern AI systems, driving rapid advancements in automation, perception, and intelligent decision-making.

Unsupervised Machine Learning

Unsupervised machine learning provides a powerful foundation for visual inspection by learning directly from the natural variation present in compliant product units. Instead of relying on large, labeled defect libraries, unsupervised models analyze hundreds of examples of “normal” product and construct a multidimensional representation of expected appearance patterns. During inspection, the system compares each new unit to this learned representation and flags deviations as potential anomalies. This approach is especially effective in environments where defect types are unpredictable, rare, or difficult to reproduce—conditions common in pharmaceutical manufacturing. Because the model is trained only on compliant units, it can detect both known and previously unseen defects, overcoming the inherent limitations of supervised learning systems that can only recognize patterns they have been explicitly taught.

By focusing on modeling normal variation rather than memorizing defect categories, unsupervised machine learning delivers more robust anomaly detection, reduces dependence on exhaustive labeling, and adapts more naturally to the complex, heterogeneous characteristics of real production environments.
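The "learn normal, flag deviations" idea can be sketched with a very simple statistical stand-in. The example below is an assumption-laden illustration, not how AVIS or any commercial system is implemented: it assumes each unit has already been reduced to a short list of numeric features (the two features here are hypothetical), models normal variation as a per-feature mean and standard deviation, and scores a new unit by its largest z-score.

```python
from statistics import mean, pstdev
from typing import List, Sequence


class NormalModel:
    """Learns per-feature mean and spread from compliant units only."""

    def __init__(self, compliant: Sequence[Sequence[float]]) -> None:
        columns = list(zip(*compliant))
        self.means: List[float] = [mean(c) for c in columns]
        # Guard against zero spread so the score never divides by zero.
        self.stds: List[float] = [pstdev(c) or 1e-9 for c in columns]

    def score(self, unit: Sequence[float]) -> float:
        """Largest absolute z-score across features."""
        return max(
            abs(x - m) / s for x, m, s in zip(unit, self.means, self.stds)
        )

    def is_anomaly(self, unit: Sequence[float], threshold: float = 4.0) -> bool:
        return self.score(unit) > threshold


# Hypothetical features per unit: [mean brightness, dark-pixel count]
compliant_features = [
    [100.0, 2.0], [102.0, 3.0], [98.0, 1.0], [101.0, 2.0], [99.0, 2.0],
]
model = NormalModel(compliant_features)

print(model.is_anomaly([100.5, 2.0]))  # False: within normal variation
print(model.is_anomaly([100.0, 9.0]))  # True: far more dark pixels than normal
```

Note that no defect examples appear anywhere in training. The second unit is flagged simply because it falls outside the variation seen in compliant product, which is why this style of model can react to defect types it has never seen.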

Traceability and Audit Trails

Traceability and audit trails are critical elements of computerised visual inspection systems used in GMP environments. The system must generate secure, time-stamped audit trails that automatically capture changes to inspection parameters, system configurations, and user access. Each entry should identify the user, the action taken, and the data before and after the change, allowing full reconstruction of system activities during operation or investigation. Traceability also applies to inspection results, ensuring each container can be linked to the specific equipment settings and decision logic in place at the time of inspection. These controls support data integrity and provide documented evidence that the system operates consistently within its validated state.
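As a rough illustration of what such an audit entry might look like in software, the sketch below records the user, action, and before/after values with a UTC timestamp. The hash chaining between entries is an added assumption, shown here as one common way to make after-the-fact edits detectable; the class and field names are hypothetical and not taken from any specific inspection product or regulation.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List, Tuple


@dataclass(frozen=True)
class AuditEntry:
    timestamp: str  # ISO 8601, UTC
    user: str
    action: str
    before: str
    after: str
    prev_hash: str  # digest of the previous entry ("" for the first)

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class AuditTrail:
    """Append-only log; each entry is chained to the one before it."""

    def __init__(self) -> None:
        self._entries: List[AuditEntry] = []

    def record(self, user: str, action: str, before: str, after: str) -> AuditEntry:
        prev_hash = self._entries[-1].digest() if self._entries else ""
        entry = AuditEntry(
            datetime.now(timezone.utc).isoformat(),
            user, action, before, after, prev_hash,
        )
        self._entries.append(entry)
        return entry

    def entries(self) -> Tuple[AuditEntry, ...]:
        return tuple(self._entries)

    def is_intact(self) -> bool:
        """True if every entry still points at the digest of its predecessor."""
        expected = ""
        for entry in self._entries:
            if entry.prev_hash != expected:
                return False
            expected = entry.digest()
        return True


trail = AuditTrail()
trail.record("jdoe", "update reject threshold", before="4.0", after="3.5")
trail.record("asmith", "grant operator access", before="none", after="operator")
print(trail.is_intact())
```

Because each entry stores the digest of its predecessor, rewriting an earlier entry breaks the chain for everything after it, which supports the "full reconstruction" goal described above.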

How to Create a New Inspection Recipe using an AVI System

There are four core steps involved in creating a new inspection recipe: record, configure, train, and run. The way these steps are executed varies significantly depending on the inspection technique being used. Some traditional visual inspection methods can require months to develop and validate a new recipe, while more modern approaches can complete the process in a matter of minutes. Choosing the right technique for your specific use case is essential to ensuring a strong return on your investment.

Step 1: Data Collection

The first step is to collect data. This is the foundation of the recipe creation process, because it is what will be used to train the model. There is an expression in the artificial intelligence community: garbage in, garbage out. In many ways, the same is true of automated visual inspection systems. The better the data you feed the system, the better the output you get in return. Quantitatively, this shows up in the system's accuracy and false eject rate.

For a neural-network-based approach, data collection is an extensive exercise. It often requires thousands of defect samples, each labeled by specific defect type. This process can take several months and, in some cases, several years.

For unsupervised machine learning, data collection looks very different. Because an unsupervised machine learning model doesn't use labeled defects, only pre-inspected, defect-free units can be used. Data collection in this case is as simple as gathering 500 units of product that have been pre-inspected and are known to have no defects. Right from the start, you can see the difference between these two approaches and the complexity each one requires.

Step 2: Configure the Model

Once the data set has been collected, the next step is to configure the model. In this step, the user guides the model toward where it should focus its efforts during the training phase and, subsequently, the detection phase. Regions of interest (ROIs) are often used during this step. ROIs are a way to call out the specific areas of a product that are more prone to defects.

In the DAI-50, for example, each designated ROI corresponds to its own machine learning model. So for a vial with five defined ROIs, five machine learning models will be trained. The benefit of this approach is a higher level of accuracy and more control over how the model is configured.
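The one-model-per-ROI structure can be sketched as follows. This is a deliberately simplified illustration under stated assumptions: images are nested lists of grayscale intensities, the ROI coordinates are made up, and each "model" is just an accepted range of mean intensity learned from compliant images, standing in for whatever model a real system would train.

```python
from dataclasses import dataclass
from typing import Dict, List

Image = List[List[int]]  # grayscale rows of 0-255 intensities


@dataclass(frozen=True)
class ROI:
    name: str
    top: int
    left: int
    height: int
    width: int

    def crop(self, image: Image) -> Image:
        rows = image[self.top:self.top + self.height]
        return [row[self.left:self.left + self.width] for row in rows]


def mean_intensity(patch: Image) -> float:
    pixels = [p for row in patch for p in row]
    return sum(pixels) / len(pixels)


@dataclass
class RoiModel:
    low: float
    high: float

    def is_normal(self, patch: Image) -> bool:
        return self.low <= mean_intensity(patch) <= self.high


def train_models(rois: List[ROI], compliant: List[Image],
                 margin: float = 5.0) -> Dict[str, RoiModel]:
    """Train one independent model per ROI from compliant images only."""
    models: Dict[str, RoiModel] = {}
    for roi in rois:
        values = [mean_intensity(roi.crop(img)) for img in compliant]
        models[roi.name] = RoiModel(min(values) - margin, max(values) + margin)
    return models


def failing_rois(image: Image, rois: List[ROI],
                 models: Dict[str, RoiModel]) -> List[str]:
    return [r.name for r in rois if not models[r.name].is_normal(r.crop(image))]


rois = [ROI("stopper", 0, 0, 2, 4), ROI("body", 2, 0, 2, 4)]
compliant = [[[200] * 4 for _ in range(4)], [[205] * 4 for _ in range(4)]]
models = train_models(rois, compliant)

suspect = [[200] * 4 for _ in range(4)]
suspect[2][1] = suspect[2][2] = 40  # dark particle in the body region

print(len(models))                           # 2
print(failing_rois(suspect, rois, models))   # ['body']
```

Keeping the models separate means a result can be traced to a specific region of the container, and each region's sensitivity can be tuned without affecting the others.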

Step 3: Train the Model

Now that the data has been collected and the model's areas of focus have been defined, we can move into the training phase. During training, the model uses the data set collected in step one and the regions of interest defined in step two to learn what it should flag as an anomaly.

With a neural-network-based approach, the model is trying to understand what defects look like, since that is what was gathered during the data collection phase. By learning what previous defects look like, it can then detect future defects. The challenge with this approach is that a new type of defect introduced in production can cause problems for the system, because it was never taught to recognize it.

Unsupervised machine learning, on the other hand, seeks to understand what normal looks like for the product. This is the exact opposite of a neural network that is trying to understand what abnormal looks like. In a way, the unsupervised approach is much closer to how a human identifies defects: we compare what we see to what we would expect to see in something normal.

Step 4: Run the Model

Finally, once the model has been trained, it is ready to be put into operation. This typically begins with an initial offline production run to evaluate how the model performs on live, uninspected units. Based on the results, the model may either proceed toward qualification for inline use or require one or more steps in the process to be repeated and refined.

False Ejects and Other Common Challenges of Automated Inspection Systems

Automated inspection systems, when properly trained and integrated, are exceptionally powerful tools. However, challenges can arise when a system is misconfigured or when the wrong technology is selected for a specific inspection problem. These shortcomings are a key reason why many manufacturers continue to rely on semi-automated visual inspection systems for difficult-to-inspect products instead of fully automated alternatives.

Challenge 1: False Ejects

By far the most significant challenge users face with automated visual inspection systems is their high false reject rate. It is not uncommon for an AVI system to reject more than 10 percent of inspected units unnecessarily. In extreme cases, we have seen manufacturers operating systems with false reject rates exceeding 30 percent — and these systems were still being used in production.

High false rejects create multiple issues. They result in wasted product and lost revenue, and they also raise regulatory concerns by calling into question the reliability and validity of the inspection method itself.

These challenges are most often associated with traditional computer vision and supervised neural network approaches. Because supervised systems are trained on examples of defective units, and rule-based systems are tuned around known defect signatures, both are typically configured with very aggressive reject thresholds. Anything that looks even remotely similar to a known defect is likely to be rejected.

A common example involves products that naturally produce bubbles. Under certain lighting conditions, a bubble can appear nearly identical to a piece of floating particulate. To a neural network trained on defect images, the distinction between a bubble and a fragment of glass can be extremely difficult — often impossible — to make with confidence, leading to unnecessary rejects.

Unsupervised machine learning, on the other hand, tends to deliver significantly lower false reject rates. These systems are trained exclusively on compliant product, learning the full range of normal variation before identifying anything that deviates from that norm. As a result, it is far more common to see false reject rates closer to one percent or less, because the model evaluates abnormalities relative to what is truly normal rather than what is merely similar to known defects.
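To put those percentages in perspective, the short sketch below computes how many good units are lost at the false reject rates mentioned above (30, 10, and 1 percent). The batch size of 10,000 good units is a hypothetical figure chosen only to make the arithmetic easy to follow.

```python
def false_reject_rate_pct(good_units_rejected: int,
                          good_units_inspected: int) -> float:
    """False reject rate as a percentage of good units inspected."""
    if good_units_inspected <= 0:
        raise ValueError("good_units_inspected must be positive")
    return 100.0 * good_units_rejected / good_units_inspected


def good_units_lost(good_units: int, false_reject_pct: float) -> int:
    """Good units rejected unnecessarily at a given false reject rate."""
    return round(good_units * false_reject_pct / 100)


batch_good_units = 10_000  # hypothetical batch of compliant units

for pct in (30, 10, 1):
    lost = good_units_lost(batch_good_units, pct)
    print(f"{pct:>2}% false rejects -> {lost} good units lost")
```

On this hypothetical batch, moving from a 10 percent to a 1 percent false reject rate is the difference between 1,000 and 100 good units discarded or sent for reinspection.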

Challenge 2: Qualification

For an automated visual inspection system to be qualified, it must pass a Knapp test, demonstrating that its performance is equal to or better than that of a trained human inspector. For some systems, this qualification step can be extremely challenging — particularly when the product itself exhibits a high degree of natural variation.
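The comparison at the heart of a Knapp study can be sketched in a few lines. This is a simplified illustration that assumes the commonly described reject-zone formulation: each unit in a test set is inspected repeatedly, units that human inspectors reject at least 70 percent of the time form the "reject zone," and the machine passes if its average rejection rate on those units is at least as high as the manual one. Real protocols specify inspector counts, repetitions, and acceptance criteria in far more detail, and all data below is made up.

```python
from typing import Dict, List

Results = Dict[str, List[bool]]  # unit id -> one True/False per inspection (True = rejected)


def rejection_probability(results: List[bool]) -> float:
    return sum(results) / len(results)


def reject_zone(manual: Results, cutoff: float = 0.7) -> List[str]:
    """Units that human inspectors reject at least `cutoff` of the time."""
    return [uid for uid, r in manual.items() if rejection_probability(r) >= cutoff]


def reject_zone_efficiency(results: Results, zone: List[str]) -> float:
    """Average rejection probability over the reject-zone units."""
    return sum(rejection_probability(results[uid]) for uid in zone) / len(zone)


def runs(rejected: int, total: int = 10) -> List[bool]:
    return [True] * rejected + [False] * (total - rejected)


# Hypothetical test set: each unit inspected 10 times by each method.
manual = {"A": runs(10), "B": runs(8), "C": runs(3), "D": runs(0)}
machine = {"A": runs(10), "B": runs(9), "C": runs(5), "D": runs(0)}

zone = reject_zone(manual)
manual_rze = reject_zone_efficiency(manual, zone)
machine_rze = reject_zone_efficiency(machine, zone)

print(zone)                       # ['A', 'B']
print(machine_rze >= manual_rze)  # True: the machine meets the manual baseline
```

Products with high natural variation make this hard because repeated inspections of the same unit disagree more often, which shifts which units land in the reject zone and how reliably either method rejects them.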

In our experience, we’ve seen numerous manufacturers invest in multi-million-dollar AVI systems only to have them sit unused on the production floor. The primary reason is the difficulty of qualifying the system and the extensive effort required to develop a reliable inspection recipe. When a system cannot meet Knapp test criteria or the recipe creation process becomes overly burdensome, the equipment often never reaches full production use.

This is yet another example highlighting the value of a simpler, more flexible AI-based approach, where recipe creation is faster and qualification becomes far more straightforward and seamless.

Challenge 3: Flexibility

Most manufacturers produce a wide range of products in medium to low volumes, which creates significant challenges for traditional automated visual inspection systems. These systems are often as large as a small school bus and contain hundreds of mechanical components that must be adjusted or replaced when switching from one product SKU to another. Even seemingly small changes — such as moving from a 5 mL vial to a 10 mL vial — can require days of reconfiguration.

Smaller, simpler systems like the DAI-50, however, can be reconfigured for new product types in a matter of minutes. This level of flexibility is especially valuable for CMOs, 503B outsourcing facilities, and compounding pharmacies, all of which are responsible for producing a wide variety of products and cannot afford lengthy changeover times.

Methods for Deploying Automated Inspection in Production

End-of-line Inspection

The United States Pharmacopeia (USP) requires 100 percent visual inspection of injectable products. As a result, the most common application for automated visual inspection systems is end-of-line inspection. End-of-line refers to the stage where the product has completed the manufacturing process but has not yet been labeled or packaged, ensuring that no obstructions interfere with the inspection.

Digital inspection machines used for end-of-line inspection are often integrated inline, allowing the entire manufacturing process to flow seamlessly from one stage to the next. This is typically achieved through a coordinated system of conveyor belts, accumulation tables, and turntables, ensuring safe and continuous product movement.

In some facilities, however, end-of-line inspection is performed offline, with products manually transported from one area to another. This is especially common in environments with limited floor space or legacy layouts that do not support inline integration.

One advantage of using smaller, more compact automated visual inspection systems is that they make inline inspection far more feasible, even in space-constrained facilities. This allows manufacturers to streamline production flow, reduce manual handling, and create a smoother transition from one stage of manufacturing to the next.

Upstream Inspection

The second most common deployment of automated visual inspection is upstream inspection, where units are inspected before filling or, in some cases, immediately after filling but before capping. The primary benefit of upstream inspection is that it enables manufacturers to detect defects earlier in the production process, rather than waiting until the end of the line. This is especially valuable for large-volume production runs, where visual inspection often lags behind initial manufacturing output.

Consider a scenario in which a facility produces 10,000 vaccine vials per day, but visual inspection is performed in a separate room and occurs only every other day. If the automated inspection system identifies a spike in defects, the production team may not discover the issue until several days later — after tens of thousands of additional units have been produced. Upstream inspection closes this gap by providing near real-time insight into product quality, allowing teams to address issues immediately and prevent large-scale scrap or rework.
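The exposure in that scenario is simple arithmetic, sketched below. The 10,000 units per day comes from the example above; the three-day detection delay is an assumed value standing in for "several days."

```python
def units_at_risk(daily_output: int, detection_delay_days: int) -> int:
    """Units produced between the onset of a defect and its discovery."""
    return daily_output * detection_delay_days


daily_output = 10_000  # from the scenario above
delay_days = 3         # assumed stand-in for "several days"

print(units_at_risk(daily_output, delay_days))  # 30000
```

Shrinking the detection delay is the only lever in this calculation that upstream inspection changes, and it is the one that matters: the closer the delay gets to zero, the fewer units are produced under the faulty condition.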

Another advantage of upstream inspection is the ability to inspect the top region of the vial before the stopper and cap are applied. Once capped, a portion of the vial becomes obstructed, making certain defects difficult or impossible to detect. Inspecting after filling but before capping provides much clearer visibility into these areas, improving overall defect detection coverage.

Secondary Inspection

Secondary inspection — sometimes referred to as 200 percent visual inspection — is the practice of re-inspecting units that have already gone through a primary inspection process. This approach is commonly used when a primary AVI system has a high false reject rate, requiring a second method to verify whether ejected units are truly defective.

In many facilities, semi-automated visual inspection systems are used to manually inspect the rejects from an automated system. Other manufacturers address this challenge by deploying a second automated visual inspection system dedicated specifically to reinspecting ejected units.

For example, a compact, medium-speed AVI such as the DAI-50 can be used to reinspect rejects coming from a high-speed AVI that processes up to 600 units per minute during primary inspection. This secondary step helps reduce unnecessary product loss and improves confidence in the overall inspection process.
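A quick way to sanity-check this arrangement is to compare the reject stream from the primary machine with the capacity of the secondary one. In the sketch below, the 600 units per minute figure comes from the text, while the reject rates and the secondary capacity of 50 units per minute are hypothetical placeholders, not specifications of any real machine.

```python
def rejects_per_minute(primary_upm: int, reject_pct: float) -> float:
    """Units per minute ejected by the primary system."""
    return primary_upm * reject_pct / 100


def secondary_keeps_pace(primary_upm: int, reject_pct: float,
                         secondary_upm: int) -> bool:
    """True if the secondary system can reinspect rejects as fast as they arrive."""
    return rejects_per_minute(primary_upm, reject_pct) <= secondary_upm


primary_upm = 600    # from the example above
secondary_upm = 50   # hypothetical capacity of the secondary system

print(rejects_per_minute(primary_upm, 5))                    # 30.0
print(secondary_keeps_pace(primary_upm, 5, secondary_upm))   # True
print(secondary_keeps_pace(primary_upm, 10, secondary_upm))  # False
```

When the reject stream exceeds the secondary capacity, rejects can still be accumulated and reinspected offline; the check only indicates whether reinspection can run continuously alongside the primary line.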

Visual Inspection Machines for Injectable Products

Automated visual inspection machines for injectable products are significantly different from those used for capsules or other solid dosage forms. Inspection systems designed for injectables are typically far more sophisticated, requiring more cameras, more advanced automation, and more intelligent software to achieve the necessary level of precision. Injectable products carry a higher health risk for patients, and the types of defects they are prone to — such as subvisible particulate, cracks, stopper issues, or fill-level anomalies — are often more challenging to detect. As a result, injectable inspection systems must

Frequently Asked Questions

1. What types of defects can an automated visual inspection machine detect in pharmaceutical products?

Automated visual inspection systems are designed to detect the three major defect classes: product defects (e.g., particulate, color changes, fill level), container defects (e.g., cracks, chips, inclusions), and closure/assembly defects (e.g., stopper, seal, or cap issues). Systems like the DAI-50 use multiple cameras, engineered lighting, and AI-based analysis to identify anomalies across the vial body, base, and closure so each unit can be released with confidence.

2. Is an automated system like the DAI-50 only for high-volume, clear-liquid products?

Historically, AVI was reserved for large, high-volume batches of clear liquid in ideal containers. The DAI-50 is different: it’s built specifically for difficult-to-inspect products (like molded glass, lyophilized cakes, suspensions, cloudy liquids) and high-mix, low-volume production where traditional high-speed AVIs don’t pencil out. That makes it a strong fit for CMOs, 503B outsourcing facilities, and compounding pharmacies that run many SKUs at smaller batch sizes.

3. How does the DAI-50 compare to manual and semi-automated inspection in accuracy and consistency?

Manual and SAVI inspection depend heavily on the individual inspector—accuracy drops with fatigue, turnover is high, and performance can vary dramatically from person to person. The DAI-50, powered by AVIS, uses unsupervised machine learning to learn “normal variation” from compliant units and then flags anything outside that pattern, delivering human-like accuracy with machine-level consistency. In head-to-head work, this approach has reduced falsely accepted defective units and significantly lowered false eject rates compared to traditional AVI and neural-network-based systems.

4. What does it take to create and qualify a new inspection recipe?

With AVIS on the DAI-50, creating a new recipe typically follows four steps: record ~500 pre-inspected compliant units, configure regions of interest (ROIs) for each camera view, train the model (usually in minutes), and run the recipe in production. Because AVIS learns from normal product variation instead of large labeled defect libraries, recipe development is dramatically faster and simpler than supervised neural-network approaches—and the trained recipe is “frozen,” making it validation-ready for IQ/OQ/PQ and Knapp studies.

5. Will implementing the DAI-50 replace our human inspectors?

The DAI-50 is designed to elevate human inspectors, not erase them. AI handles the monotonous, high-volume inspection task, while people focus on higher-value work like managing recipes, investigating rejects, handling deviations, and improving processes. Many sites use automation to redeploy inspectors into roles that better use their expertise, while also reducing the staffing, training, and turnover burden that comes with purely manual or SAVI inspection.

Conclusion

Automated visual inspection has advanced rapidly, offering manufacturers a powerful and reliable path to higher quality, lower costs, and greater operational agility. By combining precise imaging, optimized lighting, and intelligent AI-driven analysis, modern systems overcome the long-standing limitations of manual and semi-automated inspection while adapting to a broader range of products than ever before. As regulatory expectations rise and production pressures intensify, these technologies provide a scalable, future-ready foundation that helps manufacturers protect patient safety, streamline workflows, and confidently meet the demands of an evolving industry.